Artificial Intelligence is a powerful capability within our platform. It can accelerate test creation, simplify complex logic, reduce maintenance effort, and help you validate dynamic content that would otherwise require advanced scripting.

However, like any powerful tool, AI should be used thoughtfully.

This article outlines best practices to help you maximize value from AI while maintaining stability, predictability, and long-term maintainability in your automated tests.

1. Use AI When It Adds Clear Value

Our platform includes powerful built-in automation capabilities designed specifically for ERP and cloud applications. These capabilities are:

Deterministic

Predictable

Easier to debug

More stable over time

Recommendation:

Always prefer built-in, structured automation steps when they can achieve the required outcome.

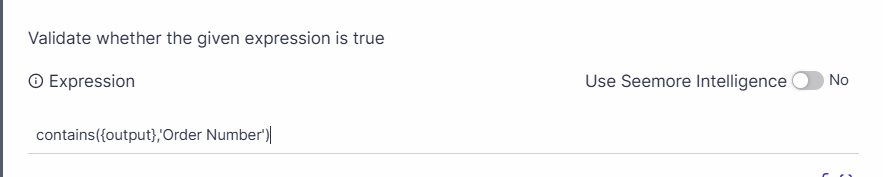

For example, you can use functions and parameters to enter or validate dynamic text:

When to Prefer Built-in Capabilities

Use native steps and predefined logic for:

Field validations

Data comparisons

Structured UI interactions

These options are optimized for automation reliability and long-term maintenance.
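To illustrate why a deterministic check is often enough for dynamic text, here is a minimal sketch in general-purpose Python. It is not the platform's own syntax: the message format and the function names `expected_confirmation` and `matches_confirmation_pattern` are hypothetical and stand in for the kind of functions and parameters mentioned above.

```python
import re
from datetime import date


def expected_confirmation(order_id: str, on: date) -> str:
    """Build the exact text expected for a known order number and date."""
    return f"Order {order_id} created on {on.isoformat()}"


def matches_confirmation_pattern(text: str) -> bool:
    """Check the message shape when the order number is not known in advance."""
    pattern = r"Order \d+ created on \d{4}-\d{2}-\d{2}"
    return re.fullmatch(pattern, text) is not None
```

Both checks return the same answer on every run for the same input, which is what makes this kind of step easy to debug. No AI is needed because the variation is limited to values that can be parameterized.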

When AI Is the Right Choice

AI is best used when:

The expected result is semantic rather than exact

The application output varies but should convey the same meaning

Complex interpretation or transformation is required

A standard automation step would require heavy customization

Examples include:

Comparing texts or images with meaning-based similarity

Simplifying complex logic

Use AI to simplify complexity, not to replace reliable built-in steps.

The strongest pattern is to combine AI with built-in capabilities: wrap each AI step in deterministic validation where possible. This approach provides both flexibility and stability.
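One way to picture wrapping AI in deterministic validation is sketched below, assuming the AI step is asked to answer in a small JSON structure. The `verdict` field, the `MATCH` / `NO_MATCH` values, and the function name `parse_ai_verdict` are assumptions made for illustration; they are not part of the platform.

```python
import json

# Hypothetical closed set of answers the AI step is allowed to return.
ALLOWED_VERDICTS = {"MATCH", "NO_MATCH"}


def parse_ai_verdict(raw_response: str) -> bool:
    """Return True for MATCH and False for NO_MATCH; reject anything else."""
    try:
        payload = json.loads(raw_response)
    except json.JSONDecodeError as exc:
        raise ValueError(f"AI response is not valid JSON: {raw_response!r}") from exc

    if not isinstance(payload, dict):
        raise ValueError("AI response must be a JSON object")

    verdict = payload.get("verdict")
    if not isinstance(verdict, str) or verdict not in ALLOWED_VERDICTS:
        raise ValueError(f"Unexpected verdict: {verdict!r}")

    return verdict == "MATCH"
```

The AI still handles the semantic judgment, but the test only passes or fails on a value from a fixed set. A free-form or malformed answer is surfaced as an error instead of being silently interpreted.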

2. Treat AI as Adaptive, Not Deterministic

Unlike predefined automation steps, AI responses may:

Vary slightly between executions

Improve or shift over time

Be influenced by prompt wording

This flexibility is powerful, but it also means AI is not inherently deterministic.

Best Practice

Before promoting a test to production:

Execute it multiple times during initial implementation

Run it across different environments if relevant

Confirm that results are consistent and reliable, and re-check them over time and after tool version upgrades

If results vary unexpectedly, refine the prompt or consider whether a built-in capability would be more appropriate.
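The repeated-execution check can be sketched as follows. This is an illustration under assumptions: `run_step` is a hypothetical callable standing in for one execution of the AI step, and how a test is actually re-run depends on the platform.

```python
from collections import Counter
from typing import Callable, Hashable, Tuple


def consistency_report(
    run_step: Callable[[], Hashable], runs: int = 5
) -> Tuple[Hashable, float]:
    """Run a step several times; return the most common result and its share."""
    if runs < 1:
        raise ValueError("runs must be at least 1")

    counts = Counter(run_step() for _ in range(runs))
    result, hits = counts.most_common(1)[0]
    return result, hits / runs
```

A share of 1.0 means every run agreed. Anything lower is a signal to refine the prompt or to reconsider whether a built-in step would serve better.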

3. Write Clear, Structured Prompts

The quality of AI output depends heavily on the prompt.

Tips for Effective Prompts

Be specific about what you expect

Define constraints clearly

Avoid ambiguity

Provide context when necessary

Specify the format of the expected answer if relevant

Example

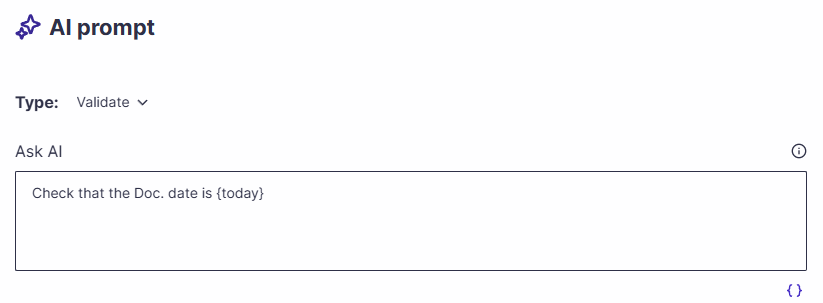

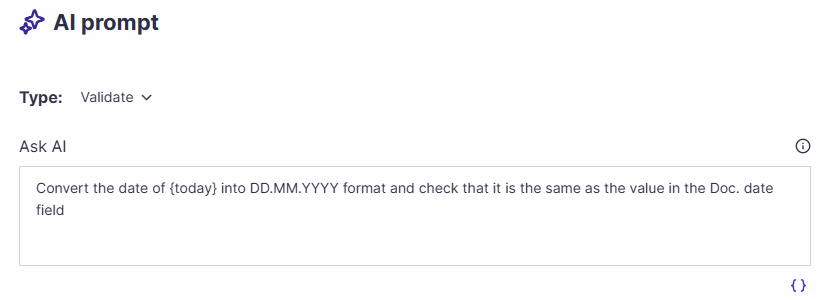

Less effective:

More effective:

Small refinements in wording can significantly improve consistency.

If the result is not what you expected:

Adjust phrasing slightly

Clarify the expected format

Narrow the scope of interpretation

Prompt tuning is a normal and expected part of using AI effectively. AI improves outcomes when guided clearly.
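The tips in this section can be sketched as a small prompt template. The section labels (`Task`, `Context`, `Constraints`, `Answer format`) and the function name `build_prompt` are assumptions chosen for illustration, not a format the platform requires.

```python
from typing import Sequence


def build_prompt(
    task: str,
    context: str = "",
    constraints: Sequence[str] = (),
    answer_format: str = "",
) -> str:
    """Assemble a prompt with one labeled section per concern."""
    if not task.strip():
        raise ValueError("task must not be empty")

    sections = [f"Task: {task.strip()}"]
    if context.strip():
        sections.append(f"Context: {context.strip()}")
    if constraints:
        bullet_lines = "\n".join(f"- {item}" for item in constraints)
        sections.append(f"Constraints:\n{bullet_lines}")
    if answer_format.strip():
        sections.append(f"Answer format: {answer_format.strip()}")

    # Blank lines between sections keep each concern visually separate.
    return "\n\n".join(sections)
```

Keeping the task, constraints, and answer format in separate sections makes it easier to adjust one of them during prompt tuning without disturbing the others.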

4. Keep AI Logic Transparent and Maintainable

For long-term maintainability:

Where applicable, document why AI was used and the intention of the prompt

Avoid overly complex, multi-purpose prompts

Keep AI steps focused and narrow in scope

Future maintainers should understand:

What the AI step is validating

Why AI was chosen instead of a standard step

What the expected AI response is
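One lightweight way to capture these three points is a small record kept next to each AI step. The sketch below assumes nothing about where the platform stores step descriptions; the class `AIStepNote` and its field names are hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class AIStepNote:
    """Documentation for a single AI step; every field is required."""

    validates: str          # what the AI step is validating
    why_ai: str             # why AI was chosen over a standard step
    expected_response: str  # what a correct AI response looks like

    def __post_init__(self) -> None:
        # Reject blank entries so the note cannot be left half-filled.
        for item in fields(self):
            if not getattr(self, item.name).strip():
                raise ValueError(f"{item.name} must not be empty")
```

For example, `AIStepNote(validates="Confirmation message meaning", why_ai="Wording varies between releases", expected_response="MATCH or NO_MATCH")` gives a future maintainer the intent of the step at a glance.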

5. Troubleshooting AI-Based Steps

If an AI step behaves unexpectedly:

Re-run the test to confirm consistency.

Review the prompt for ambiguity.

Simplify the request.

Consider whether a built-in capability is more appropriate.

Remember: AI is a tool to simplify automation, not to replace good automation design.

Summary

AI is a powerful accelerator within our codeless test automation platform. It makes some tasks feasible that otherwise would not be, but its outcomes are often less predictable than those of built-in steps.

Used correctly, it can:

Simplify complex validations

Reduce maintenance effort

Enable semantic and flexible verification

Improve automation coverage

However:

Built-in capabilities should always be your first choice.

AI should be used when it clearly adds value.

Prompts may require refinement.

Results must be validated for consistency.

Stability should always take priority over novelty.

By applying these best practices, you can confidently leverage AI while maintaining a robust, scalable, and reliable automation suite.